Windows Azure Storage is a cloud storage solution that lets customers seamlessly store limitless amounts of data for any duration of time. It has been in production since November 2008. It is used for storing application data, live streaming, social networking search, gaming and music content, and more.

Once your data is stored in Azure Storage, you can access it at any time and from anywhere, and you pay only for what you use and store. Currently, thousands of customers are already using Azure Storage services.

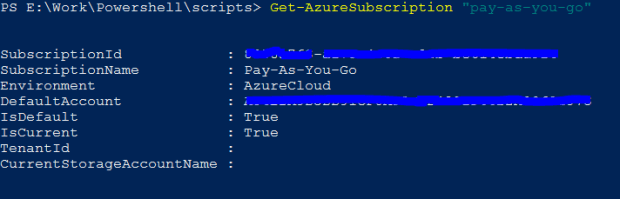

Visit the Azure Portal to create a free subscription and try out Azure Storage. Also, check out the Microsoft article for a jump start and the prerequisites for using Azure Storage.

Why use Azure Storage?

Disaster Recovery: With geo-redundant replication, Azure Storage keeps copies of your data in data centres located far apart (minimum 400 miles). This provides a strong guard against natural calamities such as earthquakes and tornadoes. Several replication options (LRS, ZRS, GRS and RA-GRS) are provided and can be chosen according to business needs.

Multi-tenancy: As with other services, Azure Storage uses shared tenancy. To reduce storage cost, data from multiple customers, each with varying workloads, is served from the same storage infrastructure. This reduces the amount of storage that must be provisioned at any one time, compared with running each service on its own dedicated hardware.

Global Namespace: For ease of use, Azure Storage implements a global namespace that allows data to be stored and accessed consistently from any location in the world.

Global Partitioned Namespace:

Exabytes of data and beyond are stored in Azure Storage, so Azure needed a solution that lets its customers store and retrieve data without hassle. To provide this capability, Azure uses DNS as part of the storage namespace and breaks the namespace into three parts: an Account Name, a Partition Name and an Object Name.

Syntax: `http(s)://AccountName.<service>.core.windows.net/PartitionName/ObjectName`

Account Name: This is the storage account name chosen by the customer (entered when creating the storage account through the Azure portal or Azure PowerShell). The Account Name is used to locate the primary storage cluster and the data centre where the requested data is stored. This primary location is where all requests go to reach the data of that account.

Partition Name: Once a request reaches the primary cluster, this name locates the data within it. It is also used to scale out access to the data across storage nodes according to traffic.

Object Name: This identifies the individual objects within that partition.

For Blobs, the full blob name is the PartitionName.

For Tables, each entity (row) in the table has a primary key that consists of two properties: the PartitionName and the ObjectName. This distinction allows applications using Tables to group rows into the same partition to perform atomic transactions across them.

For Queues, the queue name is the PartitionName and each message has an ObjectName to uniquely identify it within the queue.
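To make the three-part name concrete, here is a minimal Python sketch that splits a storage URL into Account Name, service, Partition Name and Object Name following the rules above. The function `parse_storage_url` and the path layouts it assumes for tables (`/<table>/<PartitionName>/<ObjectName>`) and queues (`/<queue>/<messageId>`) are hypothetical simplifications for illustration; they are not the actual Azure Storage REST URL formats.

```python
from typing import NamedTuple, Optional
from urllib.parse import urlsplit


class StorageName(NamedTuple):
    account: str          # Account Name: used (via DNS) to find the primary stamp
    service: str          # blob, table or queue
    partition: str        # Partition Name: locates the data inside the stamp
    obj: Optional[str]    # Object Name: identifies an object within the partition


def parse_storage_url(url: str) -> StorageName:
    """Split http(s)://AccountName.<service>.core.windows.net/<path> into name parts.

    The per-service path layouts below are simplified assumptions.
    """
    parts = urlsplit(url)
    labels = (parts.hostname or "").split(".")
    if len(labels) != 5 or labels[2:] != ["core", "windows", "net"]:
        raise ValueError(f"not a storage host: {parts.hostname!r}")
    account, service = labels[0], labels[1]
    segments = [s for s in parts.path.split("/") if s]

    if service == "blob" and segments:
        # The full blob name is the Partition Name; no separate Object Name.
        return StorageName(account, service, "/".join(segments), None)
    if service == "table" and len(segments) == 3:
        # Assumed layout /<table>/<PartitionName>/<ObjectName>;
        # the table name itself is ignored in this simplified sketch.
        _table, partition, row = segments
        return StorageName(account, service, partition, row)
    if service == "queue" and len(segments) == 2:
        # Assumed layout /<queue>/<messageId>: the queue name is the Partition Name.
        queue, message_id = segments
        return StorageName(account, service, queue, message_id)
    raise ValueError(f"unsupported service or path: {url!r}")
```

For example, `parse_storage_url("https://myaccount.blob.core.windows.net/photos/2024/cat.jpg")` yields the account `myaccount` and the Partition Name `photos/2024/cat.jpg`.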

Architectural Components:

Storage Stamp: A storage stamp is a cluster of N racks of storage nodes. Each rack is built as a separate fault domain with redundant networking and power. The goal is to keep a stamp around 70% utilized in terms of capacity, transactions and bandwidth, and to avoid going above 80%, so that about 20% is kept in reserve for (a) disk short stroking, which gains better seek time and higher throughput by using only the outer tracks of the disks, and (b) continuing to provide storage capacity and availability in the presence of a rack failure within the stamp. When a storage stamp reaches 70% utilization, the Location Service migrates accounts to other stamps using inter-stamp replication.
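The utilization policy can be sketched as a simple check. This sketch assumes each dimension (capacity, transactions, bandwidth) is reported as a fraction between 0 and 1, that the most utilized dimension decides the stamp's state, and that 80% is the ceiling implied by keeping about 20% in reserve (the figure used in the original paper). The function name `stamp_status` and its status strings are hypothetical.

```python
# Assumed thresholds: ~70% target utilization, 80% ceiling (~20% kept in reserve).
TARGET_UTILIZATION = 0.70
CEILING = 0.80


def stamp_status(capacity: float, transactions: float, bandwidth: float) -> str:
    """Classify a stamp by its most utilized dimension (each value in 0..1)."""
    peak = max(capacity, transactions, bandwidth)
    if peak >= CEILING:
        # Eating into the reserve meant for short stroking and rack failures.
        return "over-ceiling"
    if peak >= TARGET_UTILIZATION:
        # The Location Service would start migrating accounts to other stamps.
        return "migrate-accounts"
    return "ok"
```

Taking the maximum across dimensions is a conservative choice: a stamp that is light on capacity but saturated on bandwidth still triggers migration.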

Location Service (LS): The LS manages all the storage stamps and is also responsible for managing the account namespace across all stamps. The LS itself is distributed across two geographic locations for its own disaster recovery.

Azure Storage provides storage from multiple locations. Each location is a data centre that holds multiple storage stamps. To provision additional capacity, the LS can add new regions, new locations to regions and new stamps to locations. The LS can then allocate new storage accounts to those new stamps for customers, as well as load balance (migrate) existing storage accounts from older stamps to new stamps.

As shown in the figure, when an application requests a new storage account for storing data, it specifies the location affinity for the storage (for example, US North). The LS then chooses a storage stamp within that location as the primary stamp for the account and stores the account metadata in the chosen stamp, which tells the stamp to start taking traffic for the assigned account. Finally, the LS updates DNS so that requests to the name https://AccountName.service.core.windows.net/ are routed to that storage stamp's virtual IP (VIP, an IP address the storage stamp exposes for external traffic).
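The account-creation flow can be modelled with a toy Location Service. In this sketch the `Stamp` and `LocationService` classes, the least-utilized-stamp placement policy and the in-memory `dns` dictionary are all assumptions made for illustration. The real LS placement logic and DNS updates are not described in this article.

```python
from dataclasses import dataclass, field
from typing import Dict, List

SERVICES = ("blob", "table", "queue")


@dataclass
class Stamp:
    name: str
    location: str
    vip: str                    # virtual IP exposed for external traffic
    utilization: float = 0.0    # 0..1; input to the hypothetical placement policy
    accounts: Dict[str, dict] = field(default_factory=dict)  # account metadata


class LocationService:
    """Toy LS: choose a primary stamp, store account metadata there, update DNS."""

    def __init__(self) -> None:
        self.stamps: List[Stamp] = []
        self.dns: Dict[str, str] = {}   # hostname -> stamp VIP

    def add_stamp(self, stamp: Stamp) -> None:
        self.stamps.append(stamp)

    def create_account(self, account: str, location: str) -> Stamp:
        if any(account in s.accounts for s in self.stamps):
            raise ValueError(f"account name {account!r} is already taken")
        candidates = [s for s in self.stamps if s.location == location]
        if not candidates:
            raise LookupError(f"no stamp in location {location!r}")
        # Hypothetical policy: pick the least utilized stamp in the location.
        primary = min(candidates, key=lambda s: s.utilization)
        # Storing metadata tells the stamp to start taking traffic for the account.
        primary.accounts[account] = {"location": location}
        # Point every service hostname of the account at the stamp's VIP.
        for service in SERVICES:
            self.dns[f"{account}.{service}.core.windows.net"] = primary.vip
        return primary
```

With two "US North" stamps at 65% and 30% utilization, `create_account("contoso", "US North")` places the account on the 30% stamp and maps `contoso.blob.core.windows.net` (and the table and queue names) to that stamp's VIP.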

Three Layers within Storage Stamp:

Stream Layer: This layer stores the bits on disk and is in charge of distributing and replicating the data across many servers to keep data durable within a storage stamp. The stream layer can be thought of as a distributed file system layer within a stamp. It understands files, called “streams”, how to store them and how to replicate them, but it does not understand higher-level object constructs or their semantics.

Partition Layer: The partition layer is built for (a) managing and understanding higher level data abstractions (Blob, Table, Queue), (b) providing a scalable object namespace, (c) providing transaction ordering and strong consistency for objects, (d) storing object data on top of the stream layer, and (e) caching object data to reduce disk I/O.

Front End Layer: The Front-End (FE) layer consists of a set of stateless servers that take incoming requests. Upon receiving a request, an FE looks up the AccountName, authenticates and authorizes the request, then routes the request to a partition server in the partition layer (based on the PartitionName). The system maintains a Partition Map that keeps track of the PartitionName ranges and which partition server is serving which PartitionNames. The FE servers cache the Partition Map and use it to determine which partition server to forward each request to. The FE servers also stream large objects directly from the stream layer and cache frequently accessed data for efficiency.
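A front end's cached Partition Map is essentially a sorted list of Partition Name ranges. The sketch below assumes that each range is identified by its lowest Partition Name and that the ranges are contiguous starting from the empty key. It uses binary search (`bisect`) to find the partition server for a request. The `PartitionMap` class and the server names are hypothetical.

```python
import bisect
from typing import List, Tuple


class PartitionMap:
    """Maps contiguous PartitionName ranges to partition servers.

    Each range covers [low_key, next range's low_key); the first range must
    start at "" so that every PartitionName falls into some range.
    """

    def __init__(self, ranges: List[Tuple[str, str]]) -> None:
        ranges = sorted(ranges)
        if not ranges or ranges[0][0] != "":
            raise ValueError("first range must start at the empty key")
        self._lows = [low for low, _ in ranges]
        self._servers = [server for _, server in ranges]

    def server_for(self, partition_name: str) -> str:
        # Rightmost range whose low key is <= partition_name.
        i = bisect.bisect_right(self._lows, partition_name) - 1
        return self._servers[i]
```

For example, with ranges starting at `""`, `"g"` and `"p"`, the name `"orange"` routes to the server owning the `"g"` range. Keeping the low keys sorted makes each lookup O(log n) in the number of ranges.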

Two Replication Engines:

Intra-Stamp Replication (Stream Layer): This system provides synchronous replication and is focused on making sure all the data written into a stamp is kept durable within that stamp. It keeps enough replicas of the data across different nodes in different fault domains to keep data durable within the stamp in the face of disk, node, and rack failures. Intra-stamp replication is done completely by the stream layer and is on the critical path of the customer’s write requests. Once a transaction has been replicated successfully with intra-stamp replication, success can be returned back to the customer.

Inter-Stamp Replication (Partition Layer): This system provides asynchronous replication and is focused on replicating data across stamps. Inter-stamp replication is done in the background and is off the critical path of the customer’s request. This replication is at the object level, where either the whole object is replicated or recent delta changes are replicated for a given account. Inter-stamp replication is used for (a) keeping a copy of an account’s data in two locations for disaster recovery and (b) migrating an account’s data between stamps. Inter-stamp replication is configured for an account by the location service and performed by the partition layer.
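The difference between the two engines can be illustrated with a toy model. A write succeeds only after every replica inside the stamp has acknowledged it (intra-stamp, synchronous). The copy to a secondary stamp is only queued and is applied later by a background step (inter-stamp, asynchronous). The `Replica` and `ReplicatedStamp` classes, the three-replica setup and the simple FIFO queue are assumptions for illustration, not how the stream and partition layers are actually implemented.

```python
from collections import deque
from typing import Deque, Dict, List, Tuple


class Replica:
    """One copy of the data on a node in a given fault domain."""

    def __init__(self, fault_domain: str) -> None:
        self.fault_domain = fault_domain
        self.data: Dict[str, bytes] = {}

    def write(self, key: str, value: bytes) -> bool:
        self.data[key] = value
        return True  # acknowledgement


class ReplicatedStamp:
    def __init__(self, replicas: List[Replica]) -> None:
        if len({r.fault_domain for r in replicas}) != len(replicas):
            raise ValueError("replicas must be in distinct fault domains")
        self.replicas = replicas
        # Object-level changes waiting to be shipped to another stamp.
        self.geo_queue: Deque[Tuple[str, bytes]] = deque()

    def _replicate_in_stamp(self, key: str, value: bytes) -> bool:
        # Intra-stamp replication: write every replica and require all acks.
        acks = [r.write(key, value) for r in self.replicas]
        return all(acks)

    def write(self, key: str, value: bytes) -> bool:
        """Customer write: success is returned once intra-stamp replication is done."""
        ok = self._replicate_in_stamp(key, value)
        if ok:
            # Inter-stamp replication is off the critical path: just enqueue it.
            self.geo_queue.append((key, value))
        return ok

    def drain_to(self, secondary: "ReplicatedStamp") -> int:
        """Background step: apply queued changes to a secondary stamp."""
        shipped = 0
        while self.geo_queue:
            key, value = self.geo_queue.popleft()
            secondary._replicate_in_stamp(key, value)
            shipped += 1
        return shipped
```

Right after `write` returns, the secondary stamp does not yet hold the object. It only catches up when `drain_to` runs, which mirrors why inter-stamp replication does not add latency to the customer's request.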

Note: The above content has been summarized from a technical paper titled: “Windows Azure Storage: A Highly Available Cloud Storage Service with Strong Consistency” released by Microsoft.


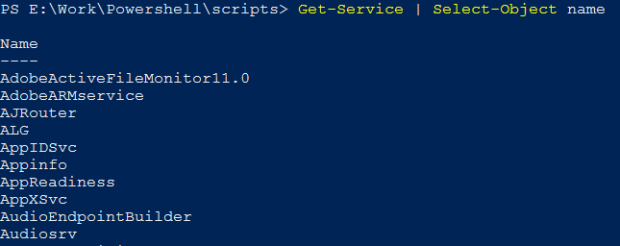

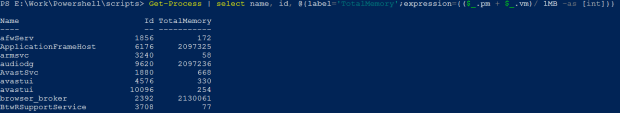

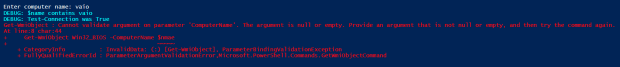

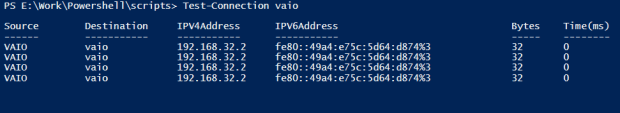

